Comet’s Year-in-Review: 2021

It’s hard to believe that, in a couple of days, we’ll be exiting 2021 and flipping our collective calendars to another new year.

The year’s end is always a wonderful time to both reflect on what has transpired and peek around the corner to envision what’s coming. Our team at Comet wanted to take a bit of time to recap and appreciate all the new developments over the course of 2021, and how you and the Comet community have made such a wonderful and exciting year possible.

We couldn’t be more thrilled about what’s to come in 2022 and beyond, but before we embark on new adventures, we wanted to pause for a moment and reminisce about a truly special year for the entire Comet team.

Wishing you all the happiest of holidays and an inspiring start to the new year!

The Comet Team

2021 was a year of tremendous growth for Comet in numerous ways, but perhaps none more striking than the rapid growth of our world-class team.

We’ve roughly tripled in team size, opened a new office in Tel Aviv, and welcomed many amazingly talented folks to our team across engineering, product, marketing, sales, growth, and more!

But we’re only getting started!

If finding a new, exciting role is on your radar for 2022, then look no further. We’re currently hiring across departments and teams—and we’re always open to working with talented, motivated people from all around the world.

Company Growth

Comet’s success in the ML market has been a primary driver of the parallel growth in our team size and diversity.

We were thrilled to raise both Series A and Series B rounds of funding—totaling more than $60 million USD—only 6 months apart and on the back of 5x growth in annual recurring revenue.

This rapid growth helped us land on several “ML Startups to Watch” lists—like this one from CRN and this one from Analytics Insight. But accolades and specific numbers aside, our team has been energized by this traction and the concurrent reception from the broader ML community.

It’s truly humbling to witness and participate in this rapid growth, and it only steels our resolve to become the de facto AI development platform for enterprises and practitioners alike.

Product Developments

Underpinning the amazing achievements outlined above has been the rapid development and improvement of the Comet platform. We’ve been blown away by all the creative and groundbreaking ways our community has been using our platform, from our experiment tracking and management tools to our newly available tools for monitoring model performance in production.

Here’s a quick rundown of what we built in 2021:

MPM: Model Production Monitoring

As we continued to improve and expand upon our powerful tools for experiment tracking, logging, and visualization, we came to realize that in order to build a more complete ML experimentation platform, we’d need to address the elephant in the enterprise ML room—the problem of monitoring—and fixing—models that start to drift or perform poorly once in production.

This realization led us to develop and launch a new component of our tool stack: Model Production Monitoring (MPM). It allows practitioners and teams to better understand the relationships between model performance across the ML lifecycle, effectively closing the gap between model training and performance in production.

To learn more about the what and why of MPM, check out the following resources:

Comet Artifacts

While managing and tracking your team’s ML experiments, understanding data sources and their lineage—both in terms of inputs and outputs—is crucial for projects that require multi-stage pipelines, or use cases where the output data of one model training run serves as the input data for a new training run.

We built Comet Artifacts to solve these data lineage and usability challenges. Put concisely, Artifacts provides a convenient way to log, version, and browse data from all parts of an experimentation pipeline.
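To make the idea of data lineage concrete, here is a minimal sketch in plain Python. It is not the Comet API: the `ArtifactStore` and `ArtifactVersion` names, the artifact names, and the sample bytes are all hypothetical, made up for illustration. It only shows the general pattern of versioning data by content and recording which upstream data each output was derived from.

```python
import hashlib
from dataclasses import dataclass, field


@dataclass
class ArtifactVersion:
    name: str
    version: int
    digest: str  # SHA-256 hash of the logged bytes
    parents: list = field(default_factory=list)  # (name, version) pairs this data came from


class ArtifactStore:
    """Hypothetical in-memory store for illustration; NOT the Comet API."""

    def __init__(self):
        self._versions = {}  # artifact name -> list of ArtifactVersion

    def log(self, name, data, parents=()):
        digest = hashlib.sha256(data).hexdigest()
        history = self._versions.setdefault(name, [])
        # If the content matches the latest version, reuse that version.
        if history and history[-1].digest == digest:
            return history[-1]
        entry = ArtifactVersion(name, len(history) + 1, digest, list(parents))
        history.append(entry)
        return entry

    def lineage(self, name, version):
        """Walk parent links from an artifact back to its original inputs."""
        entry = self._versions[name][version - 1]
        chain = [(entry.name, entry.version)]
        for parent_name, parent_version in entry.parents:
            chain.extend(self.lineage(parent_name, parent_version))
        return chain


store = ArtifactStore()
raw = store.log("raw-data", b"id,label\n1,cat\n2,dog\n")
# The output of one stage is the input of the next, so record the dependency.
clean = store.log("clean-data", b"id,label\n1,cat\n", parents=[(raw.name, raw.version)])
model = store.log("model-weights", b"\x00\x01\x02", parents=[(clean.name, clean.version)])

print(store.lineage("model-weights", model.version))
```

Walking the parent links from the model back to the raw data is the kind of question a lineage tool answers in a multi-stage pipeline: which exact version of which dataset produced this result.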

To dive deeper into Artifacts, take a closer look at the following resources:

- Our announcement blog post

- An end-to-end tutorial and Colab Notebook example

- The official documentation

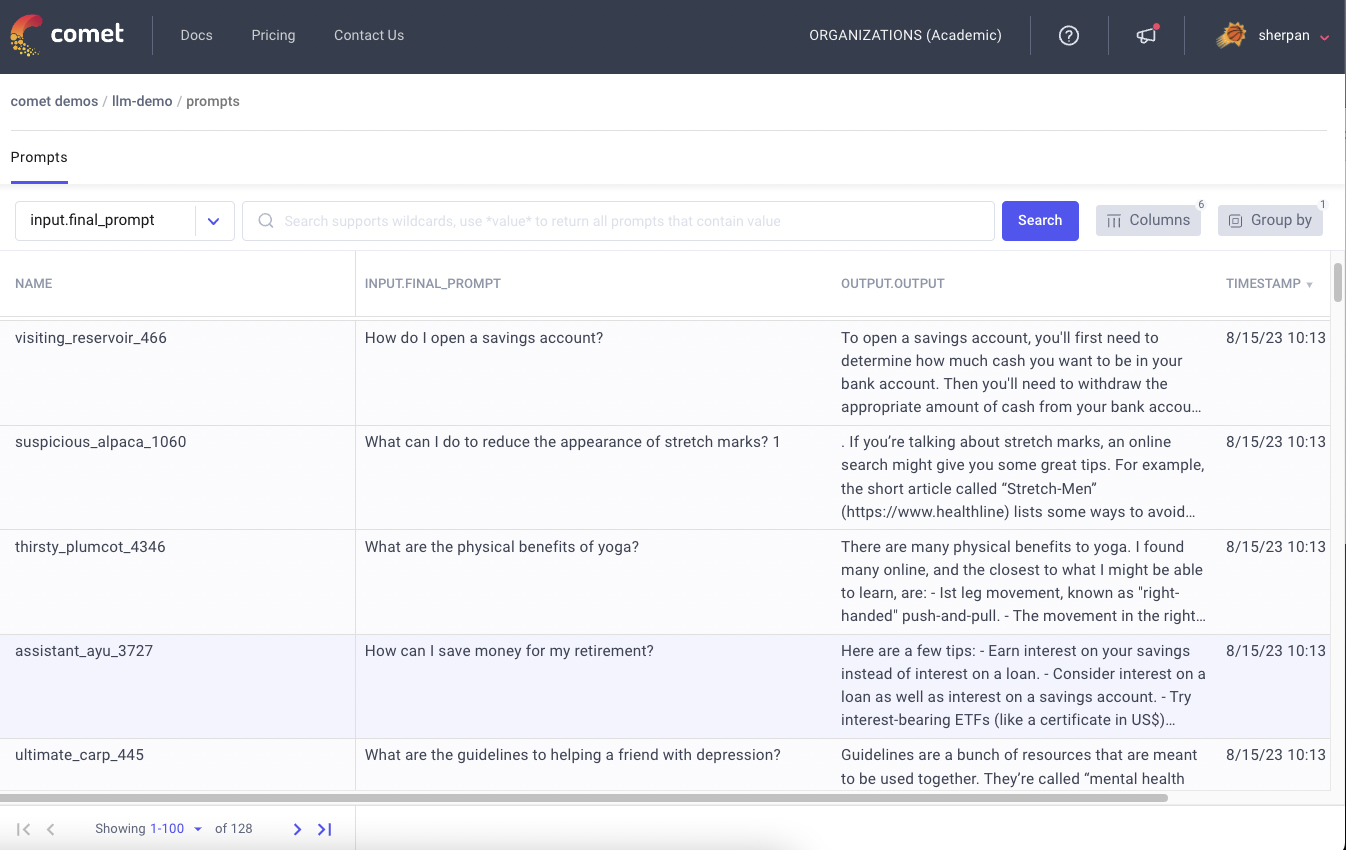

Revamped UI and Python Panels

In addition to the two brand new product features, our team also worked on major iterations of some of Comet’s existing features—specifically, a revamp of the UI and the introduction of completely custom Python visualizations rendered in the browser.

The UI revamp was designed to improve and streamline both the “Experiments” and “Tables” pages, enabling better connections between model training experiments and their resulting Panels (i.e., visualizations). Here are a couple of highlights:

- More intuitive split between Panels and the experiments table

- Streamlined dashboard allowing more Panels to be displayed at once

- The ability to compare experiments more easily

Alongside the new UI, we also launched a Beta version of “Python Panels”, which allow you to write custom visualizations the same way that you write all of your other scripts—using the Python modules that you know and love.

Previously, custom code Panels were only possible in JavaScript, a less popular language among ML practitioners. So we’re excited about the possibilities of this new way to visualize experiment results, and we hope you’ll help us further test and explore this feature in 2022.

Integrations and Partnerships

To truly become the de facto AI development platform, we also realize Comet needs to play nicely with the tool stacks practitioners and enterprise teams already work with.

That’s why, in addition to our core ML library integrations (e.g., PyTorch, TensorFlow, scikit-learn), we invested heavily in integrations and partnerships in 2021 that extend Comet’s functionality and versatility.

Here’s a quick rundown of our integration and partnership work in 2021, with links to tutorials, notebooks, and more information:

TensorboardX

Auto-log TensorboardX events to Comet

Gradio

GUI model demos directly in the Comet UI

New Relic

Full-stack ML observability

GitLab

CI/CD for ML workflows

Catalyst

Leverage accelerated, PyTorch-based deep learning training pipelines

Spark NLP

Production-grade, state-of-the-art NLP

Sweetviz

Automatic EDA tracking

Aquarium Learn

Exploring and improving datasets through robust data management

Comet Community

Last but certainly not least, 2021 was a banner year for the Comet Community—tons of new educational content, a brand new contributor program, sponsorship of two industry-leading outlets, a number of really exciting events…

Oh, and, as always, Comet remains completely free for community practitioners and academics!

Heartbeat: Community Publication and Contributor Program

In October, we officially acquired and (re)launched Heartbeat, a community-driven publication that, since 2017, has been at the forefront of technical content serving data science, ML, and DL practitioners.

Heartbeat’s stature among industry publications, combined with its dedication to supporting practitioners and writers from around the world, made it a natural fit for the Comet community.

Since that launch in early October, we’ve already been fortunate enough to publish more than 50 technical articles from more than two dozen technical contributors around the world.

We’re committed to the legacy and future of the Heartbeat publication as a premier source of technical content that speaks to the needs, interests, and aspirations of the ML practitioner community. That’s why we’re paying contributors for their articles and providing full editorial support along the way.

Interested in contributing? We’d love to hear from you! Learn more in our official Call for Contributors.

Deep Learning Weekly: Industry-Leading Newsletter

In addition to Heartbeat, we also became the lead sponsor of Deep Learning Weekly, one of the premier weekly newsletters in the industry, covering the best and most essential industry news, research, code examples, learning resources, and more across the ML and DL world.

DLW has published more than 225 issues and recently eclipsed 16,000 subscribers, and we’re excited to carry on its excellent legacy and help make it even better!

Click here to subscribe for free and read the entire archive!

Comet Industry Events

With such amazing customers, community members, and networks, we take seriously our responsibility to share that wealth of knowledge with the broader ML community.

One way we did that in 2021 was through a number of really interesting and fun events (all virtual, unfortunately) that explored some of the most interesting and relevant industry topics, ranging from deeply technical looks at ML system design to strategies and tools for collaboration within ML workflows.

Here’s a rundown of some of our favorite events from 2021:

Comet Roundtable: ML Development at Scale in the Enterprise

This virtual roundtable from early December featured a wide-ranging discussion with ML leaders from Uber AI, The RealReal, and WorkFusion, exploring the past year of their ML work in the enterprise. We covered:

- Key challenges faced by enterprise teams and how they are overcoming them

- Helpful tools and approaches for building and deploying ML at scale

- Surprising discoveries that have changed the way enterprise teams build AI

This was an incredibly dynamic session with tons of valuable insights from some of the biggest and best ML teams in the world. ICYMI, you can access the full session on-demand here, as well as review some of the best highlights on this YouTube Playlist.

Stanford MLSys Seminar: MLOps System Design

In late October, Comet CEO and co-founder Gideon Mendels joined the well-regarded Stanford MLSys Seminar series to discuss how some of the industry leaders are approaching MLOps system design—all the way from creating an initial baseline to creating systems to monitor and iterate on models once they’re in production.

Essentially, it was a deeply technical and thorough examination of the development-to-production feedback loop.

You can check out the full session here.

Lessons from the Field in Building Your MLOps Strategy

Comet Data Scientist Harpreet Sahota—new to the Comet team in 2021—jumped right into the deep end with his excellent presentation centered on some of the lessons our team has learned about building and executing an MLOps strategy. This presentation focuses on when, why, and how growing ML teams should think about hiring, building, and buying their MLOps approach.

While Harpreet delivered this talk numerous times, here’s the most recent version from early November, delivered in partnership with Neuton AI.

Industry Q&A: Building Creative User Interfaces and Experiences with ML

In this Industry Q&A, we explored the intersection of user interfaces and experiences powered by machine learning. To help us dive into this topic, our team spoke with Hart Woolery, CEO and Founder of 2020CV, and Victor Dibia, Principal Research Engineer at Cloudera Fast Forward Labs.

Industry Q&A: Collaborative Data Science and Machine Learning

In August, we sat down with Jakub Jurovych of Deepnote and Abubakar Abid of Gradio to chat about all things collaboration in the fields of data science and machine learning. We covered everything from the need for more collaborative tools and processes all the way to words of wisdom for aspiring DS/ML practitioners.

Looking Ahead to 2022

If you’ve made it this far, then you’ve probably realized we had a busy and exciting 2021.

That said, we’re pumped to create, innovate, and share more of what we’re working on and learning in 2022. You can expect many more industry- and practitioner-focused events, a lot (a lot) more content about the burgeoning MLOps / production-level ML space, a variety of Comet product innovations…and some new surprises we can’t reveal just yet.

We hope to see you all in 2022. Happy New Year, from the entire Comet team!